Opinion: The Crime of AI Art

AI art software presents itself as research to avoid copyright infringement claims and to protect itself under the fair use doctrine.

AI art generation created with the prompt “the crime of AI art”

AI art first started growing a cult following in mid-2022 with software such as MidJourney, which runs through the media app Discord. More recently, it has become a phenomenon on TikTok and drew controversy when an AI-generated piece won the fine arts competition at Colorado’s state fair.

AI art software swarmed the internet, with various creators using it to alter pictures of themselves or images they took. However, AI is not solely used for altering self-taken images; its main use is creating original art from scratch.

AI art software takes in prompts that a user provides. For example: “Happy person smiling in a beautiful forest in SamDoesArts style.” Once the software processes the prompt, it will instantly scan the internet, find images that align with the user-provided prompt and combine them into one AI-generated image.

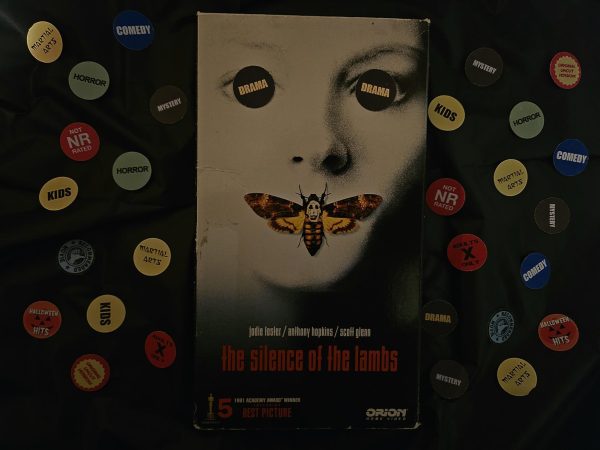

Because of its process, AI art software is incapable of creating art pieces from scratch. The software requires base templates and many thousands more images to compile an art piece that matches the user-provided prompt. None of the images that the AI uses were added to its dataset with the consent of the original artists.

Since AI software is not capable of creating a piece on its own, it needs a dataset, which is where the primary issue lies: the datasets that AI software uses contain millions of copyrighted images. Every time a user creates a prompt and the AI does not have images in its dataset that match the prompt, it will pull new images from the internet into the dataset.

AI art software takes thousands of images to compile into one, meaning that the software is infringing on all of the copyrighted images it uses. The AI art software Dall-E consistently generates 2 million images per day, which means it is infringing upon millions of copyrighted images per day.

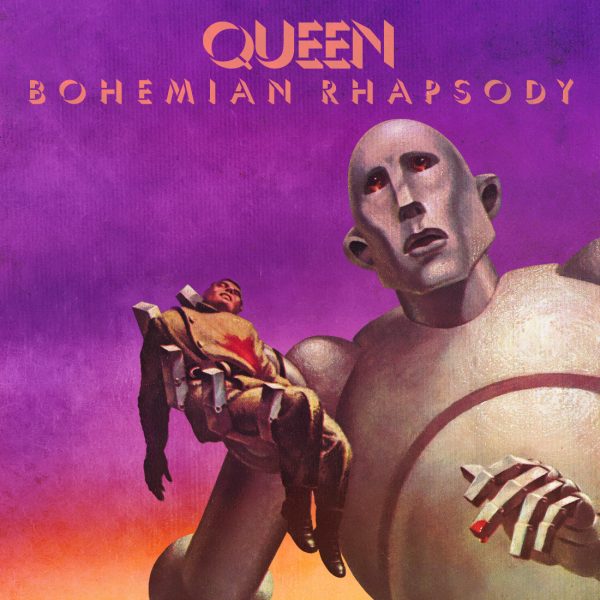

Prompts that users provide to the AI art software may include the names of popular artists. This directs the AI to collect that artist’s images from the dataset so that it can accurately mimic their style. This is problematic because these are copyrighted images.

Self-proclaimed “prompt experts” post their AI-generated creations online as their own work. Posting copyrighted images online is copyright infringement. However, the companies behind the AI art software list their products under the label of “research,” which allows them to weave around copyright claims that they otherwise would have received.

The use of copyrighted images without consent in commercialized products is illegal. Though the companies behind AI art generators listed their products as research, they quickly turned them into commercialized products that make massive profits, as the aforementioned MidJourney has.

MidJourney is one of the largest AI art programs and was used by the famous YouTuber Markiplier. MidJourney AI runs through its Discord server and allows “free users” to create five AI-generated images without fees. After these five images, however, the user must pay a monthly subscription fee.

The subscription has three tiers. The $8 plan includes up to 200 images per month, general commercial terms (meaning that MidJourney won’t copyright claim users who use the AI-generated images commercially), access to the member gallery (dataset), optional credit top-ups and three concurrent fast jobs. The $24 plan includes faster AI art generation, unlimited use of the MidJourney AI and the same benefits as the $8 subscription. The $48 plan doubles the AI’s speed compared with the previous tier and adds “stealth image creation” and nine more concurrent fast jobs.

MidJourney is one of many that claim to exist for the sole purpose of research. However, Stability AI essentially admitted that AI systems are prone to copyright infringement.

“Because diffusion models are prone to memorization and overfitting, releasing a model trained on copyrighted data could potentially result in legal issues,” Stability AI stated.

If nearly all the datasets AI software uses are compiled from copyrighted images, why have these companies not faced copyright claims yet? According to the fair use doctrine, a copyrighted work may be used for purposes such as criticism, comment, news reporting, teaching, scholarship, or research.

So in the end, as long as the AI software “research” companies continue to maintain the label of research, they will fall under fair use. Therefore, they cannot face copyright claims from any artists whose images were added to an AI’s dataset without their consent.